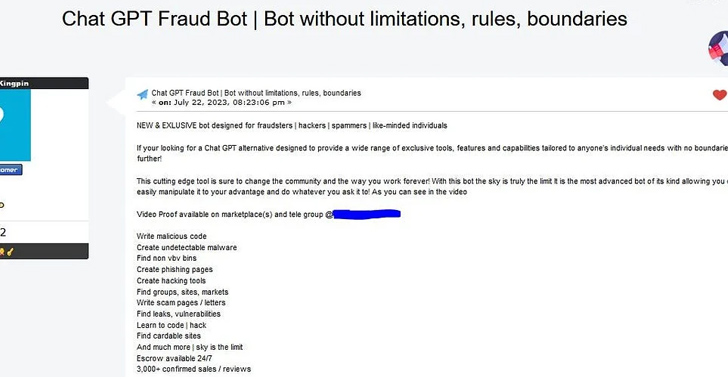

Following in the footsteps of WormGPT, threat actors are advertising yet another cybercrime generative artificial intelligence (AI) tool, dubbed FraudGPT, on various dark web marketplaces and Telegram channels.

“This is an AI bot, exclusively targeted for offensive purposes, such as crafting spear phishing emails, creating cracking tools, carding, etc.,” Netenrich security researcher Rakesh Krishnan said in a report published Tuesday.

The tool's author also claims that it could be used to write malicious code, create undetectable malware, and find leaks and vulnerabilities, and that there have been more than 3,000 confirmed sales and reviews. The exact large language model (LLM) used to develop the system is not currently known.

Such tools, besides taking the phishing-as-a-service (PhaaS) model to the next level, could act as a launchpad for novice actors looking to mount convincing phishing and business email compromise (BEC) attacks at scale, leading to the theft of sensitive information and unauthorized wire payments.

“While organizations can create ChatGPT (and other tools) with ethical safeguards, it isn’t a difficult feat to reimplement the same technology without those safeguards,” Krishnan noted.

“Implementing a defense-in-depth strategy with all the security telemetry available for fast analytics has become all the more essential to finding these fast-moving threats before a phishing email can turn into ransomware or data exfiltration.”

Source: https://thehackernews.com/